Disable Upload Option in Ambari Files View

This chapter is about managing HDFS storage with HDFS shell commands. You'll also learn about the dfsadmin utility, a key ally in managing HDFS. The chapter also shows how to manage HDFS file permissions and create HDFS users. As a Hadoop administrator, one of your key tasks is to manage HDFS storage. The chapter shows how to check HDFS usage and how to allocate space quotas to HDFS users. The chapter also discusses when and how to rebalance HDFS data, as well as how you can reclaim HDFS space.


Working with HDFS is one of the most common tasks for someone administering a Hadoop cluster. Although you can access HDFS in multiple ways, the command line is the most common way to administer HDFS storage.

Managing HDFS users by granting them appropriate permissions and allocating HDFS space quotas to users are some of the common user-related administrative tasks you'll perform on a regular basis. The chapter shows how HDFS permissions work and how to grant and revoke space quotas on HDFS directories.

Besides the management of users and their HDFS space quotas, there are other aspects of HDFS that you need to manage. This chapter also shows how to perform maintenance tasks such as periodically balancing the HDFS data to distribute it evenly across the cluster, as well as how to gain additional space in HDFS when necessary.

Managing HDFS through the HDFS Shell Commands

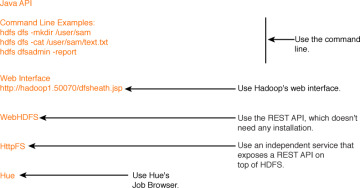

You can access HDFS in various ways:

- From the command line using simple Linux-like file system commands, as well as through a web interface, called WebHDFS
- Using the HttpFS gateway to access HDFS from behind a firewall
- Through Hue's File Browser (and Cloudera Manager and Ambari, if you're using Cloudera's or Hortonworks' Hadoop distributions)

Figure 9.1 summarizes the various ways in which you can access HDFS. Although you have multiple ways to access HDFS, it's a good bet that you'll often be working from the command line to manage your HDFS files and directories. You can access the HDFS file system from the command line with the hdfs dfs file system commands.

Figure 9.1 The many ways in which you can access HDFS

Using the hdfs dfs Utility to Manage HDFS

You use the hdfs dfs utility to issue HDFS commands in Hadoop. Here's the usage of this command:

hdfs dfs [GENERIC_OPTIONS] [COMMAND_OPTIONS]

Using the hdfs dfs utility, you can run file system commands on the file system supported in Hadoop, which happens to be HDFS.

You can use two types of HDFS shell commands:

- The first set of shell commands are very similar to common Linux file system commands such as ls, mkdir and so on.
- The second set of HDFS shell commands are specific to HDFS, such as the command that lets you set the file replication factor.

You can access the HDFS file system from the command line, over the web, or through application code. HDFS file system commands are in many cases quite similar to familiar Linux file system commands. For example, the command hdfs dfs -cat /path/to/hdfs/file works the same as a Linux cat command, by printing the output of a file onto the screen.

Internally HDFS uses a pretty sophisticated algorithm for its file system reads and writes, in order to support both reliability and high throughput. For example, when you issue a simple put command that writes a file to an HDFS directory, Hadoop will need to write that data fast to three nodes (by default).

You can access the HDFS shell by typing hdfs dfs <command> at the command line. You specify actions with subcommands that are prefixed with a minus (-) sign, as in dfs -cat for displaying a file's contents.

You may view all available HDFS commands by just invoking the hdfs dfs command with no options, as shown here:

$ hdfs dfs
Usage: hadoop fs [generic options]
[-appendToFile <localsrc> ... <dst>]
[-cat [-ignoreCrc] <src> ...]

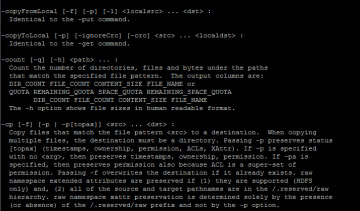

Figure 9.2 shows all the available hdfs dfs commands.

However, it's the hdfs dfs -help command that's truly useful to a beginner and even quite a few "experts": this command clearly explains all the hdfs dfs commands. Figure 9.3 shows how the help utility clearly explains the various file copy options that you can use with the hdfs dfs command.

Figure 9.3 How the hdfs dfs -help command helps you understand the syntax of the various options of the hdfs dfs command

In the following sections, I show you how to

- List HDFS files and directories
- Use the HDFS stat command
- Create an HDFS directory
- Remove HDFS files and directories
- Change file and directory ownership
- Change HDFS file permissions

Listing HDFS Files and Directories

As with regular Linux file systems, use the ls command to list HDFS files. You can specify various options with the ls command, as shown here:

$ hdfs dfs -usage ls
Usage: hadoop fs [generic options] -ls [-d] [-h] [-R] [<path> ...]
bash-4.2$

Here's what the options stand for:

-d: Directories are listed as plain files.
-h: Format file sizes in a human-readable fashion (eg 64.0m instead of 67108864).
-R: Recursively list subdirectories encountered.
-t: Sort output by modification time (most recent first).
-S: Sort output by file size.
-r: Reverse the sort order.
-u: Use access time rather than modification time for display and sorting.

Listing Both Files and Directories

If the target of the ls command is a file, it shows the statistics for the file, and if it's a directory, it lists the contents of that directory. You can use the following command to get a directory listing of the HDFS root directory:

$ hdfs dfs -ls /
Found 8 items
drwxr-xr-x - hdfs hdfs 0 2013-12-11 09:09 /data
drwxr-xr-x - hdfs supergroup 0 2015-05-04 13:22 /lost+found
drwxrwxrwt - hdfs hdfs 0 2015-05-20 07:49 /tmp
drwxr-xr-x - hdfs supergroup 0 2015-05-07 14:38 /user
...
#

For example, the following command shows all files within a directory ordered by filenames:

$ hdfs dfs -ls /user/hadoop/testdir1

Alternately, you can specify the HDFS URI when listing files:

$ hdfs dfs -ls hdfs://<hostname>:9000/user/hdfs/dir1/

You can also specify multiple files or directories with the ls command:

$ hdfs dfs -ls /user/hadoop/testdir1 /user/hadoop/testdir2

Listing Just Directories

You can view information that pertains just to directories by passing the -d option:

$ hdfs dfs -ls -d /user/alapati
drwxr-xr-x - hdfs supergroup 0 2015-05-20 12:27 /user/alapati
$

The following two ls command examples show file information:

$ hdfs dfs -ls /user/hadoop/testdir1/test1.txt
$ hdfs dfs -ls hdfs://<hostname>:9000/user/hadoop/dir1/

Note that when you list HDFS files, each file will show its replication factor in the second column. In this case, the file test.txt has a replication factor of 3 (the default replication factor).

$ hdfs dfs -ls /user/alapati/
-rw-r--r-- 3 hdfs supergroup 12 2016-05-24 15:44 /user/alapati/test.txt
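If you want only the replication factor rather than the whole listing, you can filter the ls output. The following is a minimal sketch, not part of the original chapter: the function name replication_of is made up for illustration, and the sample line is copied from the listing above so the snippet runs without a cluster. It assumes paths contain no spaces.

```shell
# Sample line copied from the listing above; on a live cluster you would
# pipe the output of `hdfs dfs -ls <path>` into the function instead.
sample='-rw-r--r-- 3 hdfs supergroup 12 2016-05-24 15:44 /user/alapati/test.txt'

# replication_of (hypothetical helper): read ls-style lines on stdin and
# print "<path> <replication>" for regular files. Directory lines start
# with "d" and the "Found N items" header starts with "F", so both are skipped.
replication_of() {
  awk '$1 ~ /^-/ && NF >= 8 { print $8, $2 }'
}

printf '%s\n' "$sample" | replication_of
```

On a cluster, the equivalent call would look like hdfs dfs -ls /user/alapati/ | replication_of.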

Using the hdfs stat Command to Get Details about a File

Although the hdfs dfs -ls command lets you get the file information you need, there are times when you need specific bits of information from HDFS. When you run the hdfs dfs -ls command, it returns the complete path of the file. When you want to see only the base name, you can use the hdfs dfs -stat command to view only specific details of a file.

You can format the output of the hdfs dfs -stat command with the following options:

%b Size of file in bytes
%F Will return "file", "directory", or "symlink" depending on the type of inode
%g Group name
%n Filename
%o HDFS block size in bytes (128MB by default)
%r Replication factor
%u Username of owner
%y Formatted mtime of inode
%Y UNIX Epoch mtime of inode

In the following example, I show how to confirm if a file or directory exists.

# hdfs dfs -stat "%n" /user/alapati/letters
letters

If you run the hdfs dfs -stat command against a directory, it tells you that the name you specify is indeed a directory.

$ hdfs dfs -stat "%b %F %g %n %o %r %u %y %Y" /user/alapati/test2222
0 directory supergroup test2222 0 0 hdfs 2015-08-24 20:44:11 1432500251198
$

The following examples show how you can view different types of information with the hdfs dfs -stat command when compared to the hdfs dfs -ls command. Note that I specify all the -stat command options here.

$ hdfs dfs -ls /user/alapati/test2222/true.txt
-rw-r--r-- 2 hdfs supergroup 12 2015-08-24 15:44 /user/alapati/test2222/true.txt
$
$ hdfs dfs -stat "%b %F %g %n %o %r %u %y %Y" /user/alapati/test2222/true.txt
12 regular file supergroup true.txt 268435456 2 hdfs 2015-05-24 20:44:11 1432500251189
$
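Because the "regular file" value of %F contains a space, splitting the full -stat output on whitespace is fragile in a script. The sketch below assumes you pick your own delimiter (a pipe here) in the format string and that it is passed through unchanged. The sample line is assembled by hand from the values in the output above, not captured from a cluster, and the variable names are illustrative.

```shell
# Hand-built sample of what `hdfs dfs -stat "%n|%b|%r|%o" <file>` would print
# for true.txt, using the values shown in the output above (assumption: the
# pipe characters in the format string appear literally in the output).
sample='true.txt|12|2|268435456'

# Split the line on "|" into named fields.
IFS='|' read -r name bytes replication block_size <<EOF
$sample
EOF

# Convert the block size from bytes to megabytes (1 MB = 1048576 bytes).
block_mb=$(( block_size / 1048576 ))

echo "$name: $bytes bytes, replication $replication, block size $block_mb MB"
```

The arithmetic shows that the %o value of 268435456 in the example corresponds to a 256 MB block size, that is, twice the 128 MB default listed in the option table.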

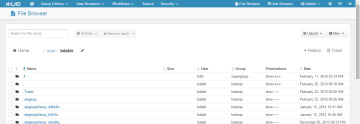

I'd be remiss if I didn't add that you can also access HDFS through Hue's File Browser, as shown in Figure 9.4.

Figure 9.4 Hue's File Browser, showing how you can access HDFS from Hue

Creating an HDFS Directory

Creating an HDFS directory is similar to how you create a directory in the Linux file system. Issue the mkdir command to create an HDFS directory. This command takes path URIs as arguments to create one or more directories, as shown here:

$ hdfs dfs -mkdir /user/hadoop/dir1 /user/hadoop/dir2

The directory /user/hadoop must already exist for this command to succeed.

Here's another example that shows how to create a directory by specifying a directory with a URI.

$ hdfs dfs -mkdir hdfs://nn1.example.com/user/hadoop/dir

If you want to create parent directories along the path, specify the -p option with the hdfs dfs -mkdir command, just as you would do with its cousin, the Linux mkdir command.

$ hdfs dfs -mkdir -p /user/hadoop/dir1

In this command, by specifying the -p option, I create both the parent directory hadoop and its subdirectory dir1 with a single mkdir command.
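If you don't have a cluster at hand, you can see the same parent-directory behavior with the Linux cousin of the command. The following sketch runs entirely on the local file system in a temporary directory; it illustrates the semantics of -p only and does not touch HDFS. The variable names are illustrative.

```shell
# Work in a throwaway local directory so nothing on the system is changed.
workdir=$(mktemp -d)

# Without -p, mkdir fails because the parents user/ and user/hadoop/ are missing.
if mkdir "$workdir/user/hadoop/dir1" 2>/dev/null; then
  plain_status="created"
else
  plain_status="failed"
fi

# With -p, the missing parents are created along with dir1.
if mkdir -p "$workdir/user/hadoop/dir1" && [ -d "$workdir/user/hadoop/dir1" ]; then
  p_status="created"
else
  p_status="failed"
fi

echo "plain mkdir: $plain_status"
echo "mkdir -p:    $p_status"

# Clean up the temporary directory.
rm -rf "$workdir"
```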

Removing HDFS Files and Directories

HDFS file and directory removal commands work similar to the analogous commands in the Linux file system. The rm command with the -R option removes a directory and everything under that directory in a recursive fashion. Here's an example.

$ hdfs dfs -rm -R /user/alapati
15/05/05 12:59:54 INFO fs.TrashPolicyDefault: Namenode trash configuration: Deletion interval = 1440 minutes, Emptier interval = 0 minutes.
Moved: 'hdfs://hadoop01-ns/user/alapati' to trash at: hdfs://hadoop01-ns/user/hdfs/.Trash/Current
$

I issued an rm -R command, and I can verify that the directory I want to remove is indeed gone from HDFS. However, the output of the rm -R command shows that the directory is still saved for me, in case I need it, in HDFS's trash directory. The trash directory serves as a built-in safety mechanism that protects you against accidental file and directory removals. If you haven't already enabled trash, please do so ASAP!

Even when you enable trash, sometimes the trash interval is set too low, so make sure that you configure the fs.trash.interval parameter in the core-site.xml file accordingly. The value is expressed in minutes. For example, setting this parameter to 14400 means Hadoop will retain the deleted items in trash for a period of ten days.
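Since fs.trash.interval is given in minutes, it's easy to slip by a factor when you think in days. The sketch below is a small helper, with a made-up function name, that converts a retention period in days to minutes (1 day = 1440 minutes) and prints the property element you would place in your Hadoop configuration file. It only generates text and does not modify any configuration.

```shell
# trash_property (hypothetical helper): print an fs.trash.interval property
# element for a retention period given in whole days.
trash_property() {
  days=$1
  minutes=$(( days * 1440 ))   # 24 hours * 60 minutes per day
  cat <<EOF
<property>
  <name>fs.trash.interval</name>
  <value>$minutes</value>
</property>
EOF
}

# Ten days of retention, matching the example in the text.
trash_property 10
```

Going the other way, the 1440-minute deletion interval reported in the rm output above works out to a single day of retention.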

You can view the deleted HDFS files currently in the trash directory by issuing the following command:

$ hdfs dfs -ls /user/sam/.Trash

You can use the -rmdir option to remove an empty directory:

$ hdfs dfs -rmdir /user/alapati/testdir

If the directory you wish to remove isn't empty, use the -rm -R option as shown earlier.

If you've configured HDFS trash, any files or directories that you delete are moved to the trash directory and retained there for the length of time you've configured for the trash directory. On some occasions, such as when a directory fills up beyond the space quota you assigned for it, you may want to permanently delete files immediately. You can do so by issuing the hdfs dfs -rm command with the -skipTrash option, placed before the path:

$ hdfs dfs -rm -skipTrash /user/alapati/test

The -skipTrash option will bypass the HDFS trash facility and immediately delete the specified files or directories.

You can empty the trash directory with the expunge command:

$ hdfs dfs -expunge

All files in trash that are older than the configured time interval are deleted when you issue the expunge command.

Changing File and Directory Ownership and Groups

You can change the owner and group names with the -chown command, as shown here:

$ hdfs dfs -chown sam:produsers /data/customers/names.txt

You must be a super user to modify the ownership of files and directories.

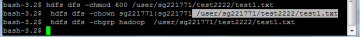

HDFS file permissions work very similar to the way you modify file and directory permissions in Linux. Figure 9.5 shows how to issue the familiar chmod, chown and chgrp commands in HDFS.

Figure 9.5 Changing file mode, ownership and group with HDFS commands

Changing Groups

You can change just the group of a file or directory with the chgrp command, as shown here:

$ sudo -u hdfs hdfs dfs -chgrp marketing /users/sales/markets.txt

Changing HDFS File Permissions

You can use the chmod command to change the permissions of a file or directory. You can use standard Linux file permissions. Here's the general syntax for using the chmod command:

hdfs dfs -chmod [-R] <mode> <file/dir>

You must be a super user or the owner of a file or directory to change its permissions.

With the chgrp, chmod and chown commands you can specify the -R option to make recursive changes through the directory structure you specify.
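When you pass a numeric mode to chmod, it helps to know which permission string it will produce in the ls output. The following is a minimal sketch of that mapping for three-digit octal modes; the function name octal_to_symbolic is invented for illustration, and special bits such as the sticky bit (the trailing t on /tmp in the earlier listing) are deliberately not handled.

```shell
# octal_to_symbolic (hypothetical helper): convert a three-digit octal mode
# such as 755 into the rwx string shown by ls, such as rwxr-xr-x.
octal_to_symbolic() {
  mode=$1
  case $mode in
    [0-7][0-7][0-7]) ;;
    *) echo "expected three octal digits, got: $mode" >&2; return 1 ;;
  esac
  out=""
  # Walk the owner, group and other digits from left to right.
  for digit in $(printf '%s' "$mode" | sed 's/./& /g'); do
    case $digit in
      0) out="${out}---" ;;
      1) out="${out}--x" ;;
      2) out="${out}-w-" ;;
      3) out="${out}-wx" ;;
      4) out="${out}r--" ;;
      5) out="${out}r-x" ;;
      6) out="${out}rw-" ;;
      7) out="${out}rwx" ;;
    esac
  done
  printf '%s\n' "$out"
}

octal_to_symbolic 755
octal_to_symbolic 644
```

The two calls correspond to the directory and file permissions that appear in the listings earlier in this chapter.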

In this section, I'm using HDFS commands from the command line to view and manipulate HDFS files and directories. However, there's an even easier way to access HDFS, and that's through Hue, the web-based interface, which is extremely easy to use and which lets you perform HDFS operations through a GUI. Hue comes with a File Browser application that lets you list and create files and directories, download and upload files from HDFS and copy/move files. You can also use Hue's File Browser to view the output of your MapReduce jobs, Hive queries and Pig scripts.

While the hdfs dfs utility lets you manage the HDFS files and directories, the hdfs dfsadmin utility lets you perform key HDFS administrative tasks. In the next section, you'll learn how to work with the dfsadmin utility to manage your cluster.


Source: https://www.informit.com/articles/article.aspx?p=2755708
